Anchal Gupta

Python has become a popular language for data analysis, thanks to its active community and its vast selection of libraries and resources. It has the potential to become a common language for data science and for the production of web-based analytics products. I am Anchal Gupta, a Machine Learning Engineer. In this blog post, I will discuss the shift from Pandas Dataframes to Spark Dataframes.

In data science, machine learning is one of the significant elements used to maximize value from data. Therefore, it becomes essential to study the distribution and statistics of the data to get useful insights.

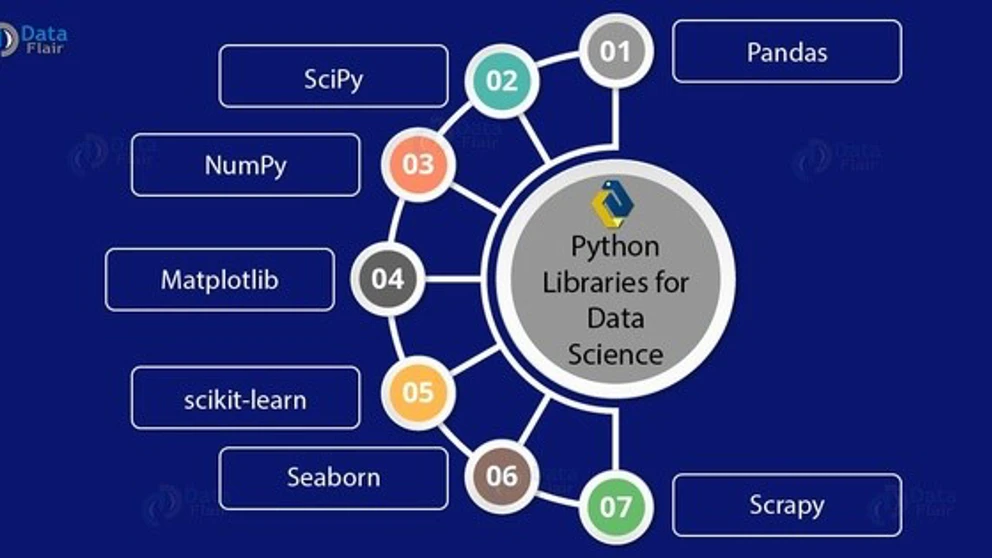

With a wide variety of packages and libraries available, such as NumPy, Pandas, Scikit-learn, Keras, and TensorFlow, the math and calculations get easier. As a result, Python has become one of the most preferred tools for data analysis and machine learning.

From a broader perspective, data can be structured, semi-structured, or unstructured.

Structured data has a clearly defined schema and form, while semi-structured and unstructured data include formats like JSON, audio, video, and social media postings.

Applications such as cognitive pricing models have most of their data in a well-defined structure. One of the most commonly used packages to analyze and manipulate this type of data is Pandas.

What is Python Pandas?

The name Pandas is derived from “panel data”, an econometrics term, and is also a play on “Python data analysis”. Whoever knew that?

It is an open-source Python package that provides efficient, easy-to-use data structures for data analysis.

When to Use Python Pandas?

Pandas is an ideal tool for data wrangling. Python Pandas is intended for quick and easy data manipulation tasks such as:

- Reading

- Visualization

- Aggregation

Pandas can read data from most common file formats, such as:

- CSV

- JSON

- Plain Text

Its extended features also allow us to connect to a SQL database.
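As a minimal sketch of the reading step, the example below loads CSV and JSON text and then queries a SQL table. The sample data, the table name `sales`, and the in-memory SQLite database are made up for illustration; in practice you would pass file paths and a connection to your own database.

```python
import io
import sqlite3

import pandas as pd

# Hypothetical sample data; real code would usually pass file paths instead.
csv_text = "product,price,units\nA,10.0,3\nB,12.5,5\n"
json_text = '[{"product": "A", "price": 10.0}, {"product": "B", "price": 12.5}]'

# read_csv and read_json accept a path or any file-like object.
df_csv = pd.read_csv(io.StringIO(csv_text))
df_json = pd.read_json(io.StringIO(json_text))

# Connecting to a SQL database: write the data frame to an in-memory
# SQLite table, then read a filtered subset back with a query.
conn = sqlite3.connect(":memory:")
df_csv.to_sql("sales", conn, index=False)
df_sql = pd.read_sql("SELECT product, units FROM sales WHERE units > 3", conn)
conn.close()

print(df_csv.shape)           # (2, 3)
print(list(df_json.columns))  # ['product', 'price']
```

Each reader returns an ordinary data frame, so the rest of the analysis does not depend on where the data came from.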

After reading the data, Pandas creates a Python object in a row-and-column format, known as a data frame. A data frame is very similar to a table in an Excel spreadsheet.

What can we do using a Pandas Dataframe?

- Data manipulation tasks such as indexing, renaming, sorting, and merging data frames.

- Modifying the definition by updating, adding, and deleting columns from a data frame.

- Cleaning and data preparation by imputing missing data or NaNs.
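The tasks listed above could look like the following sketch. The column names and values are hypothetical, and imputing with the column mean is just one possible strategy.

```python
import numpy as np
import pandas as pd

# Hypothetical sample data used only for illustration.
sales = pd.DataFrame({
    "prod": ["B", "A", "C"],
    "price": [12.5, np.nan, 8.0],
    "units": [5, 3, 7],
})
regions = pd.DataFrame({
    "product": ["A", "B", "C"],
    "region": ["EU", "US", "EU"],
})

# Manipulation: rename a column, sort, and merge with another data frame.
sales = sales.rename(columns={"prod": "product"}).sort_values("product")
merged = sales.merge(regions, on="product", how="left")

# Cleaning: impute the missing price with the column mean.
merged["price"] = merged["price"].fillna(merged["price"].mean())

# Modifying the definition: add a derived column, then delete one.
merged["revenue"] = merged["price"] * merged["units"]
merged = merged.drop(columns=["units"])

print(merged)
```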

Despite all the advantages listed above, Pandas has some drawbacks too.

Why did Pandas fail for us?

With exponentially growing data, where we have billions of rows and columns, complex operations like merging or grouping require parallelization and distributed computing. A Pandas dataframe lives on a single machine and does not support parallelization, so these operations become very slow, expensive, and difficult to handle.

Therefore, to build scalable applications, we need packages or software that can be faster and support parallelization for large datasets.

My team faced this problem while working for one of my clients. We had to merge two data frames with millions of rows each, and the resulting data frame was expected to have around 39 billion rows.
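A merge can grow this much because every row on the left is paired with every row on the right that shares its key. The sketch below estimates the size of an inner merge before running it. The helper `merged_row_count` and the toy data are hypothetical and are not taken from the client project.

```python
import pandas as pd


def merged_row_count(left, right, key):
    """Rows an inner merge on `key` would produce, without doing the merge."""
    left_counts = left[key].value_counts()
    right_counts = right[key].value_counts()
    # Each key contributes (rows on the left) x (rows on the right);
    # a key missing on one side is filled with 0 and contributes nothing.
    return int(left_counts.mul(right_counts, fill_value=0).sum())


# Toy data: key "a" appears 2 times on the left and 3 times on the right.
left = pd.DataFrame({"key": ["a", "a", "b", "c"], "x": range(4)})
right = pd.DataFrame({"key": ["a", "a", "a", "b", "d"], "y": range(5)})

estimate = merged_row_count(left, right, "key")
actual = len(left.merge(right, on="key", how="inner"))
print(estimate, actual)  # 7 7
```

With repeated keys on both sides, the output grows with the product of the counts, which is how inputs with millions of rows can produce billions.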

While exploring options to overcome the above problem, we found Apache Spark to be the best alternative.

What is Apache Spark?

Apache Spark is an open-source cluster computing framework. Its in-memory primitives can make certain applications up to 100 times faster than disk-based engines such as Hadoop MapReduce.

Spark is well suited to machine learning algorithms, as it allows programs to load data into cluster memory and query it repeatedly.

- It keeps working data in memory (RAM) where possible, which makes processing faster than reading from disk drives. It can be used for creating data pipelines, running machine learning algorithms, and much more.

- Operations on Spark Dataframe run in parallel on different nodes in a cluster, which is not possible with Pandas as it does not support parallel processing.

- Apache Spark has easy-to-use APIs for operating on large datasets and across languages: Python, R, Scala and Java.
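As a rough sketch of the Python API, the function below joins two small data sets and counts rows per key. It assumes PySpark is installed and that you pass in an existing `SparkSession`. The function name, column names, and sample rows are hypothetical.

```python
def merge_and_count(spark, left_rows, right_rows):
    """Join two small data sets on `key` with Spark and count rows per key.

    `spark` is an existing pyspark.sql.SparkSession. The row lists and
    column names are hypothetical illustration data.
    """
    left = spark.createDataFrame(left_rows, ["key", "x"])
    right = spark.createDataFrame(right_rows, ["key", "y"])

    # The join and the aggregation are only planned here; Spark runs them
    # in parallel across the partitions of both data frames.
    joined = left.join(right, on="key", how="inner")
    counts = joined.groupBy("key").count()

    # collect() triggers execution and brings the small result to the driver.
    return {row["key"]: row["count"] for row in counts.collect()}


# Usage, assuming a local or cluster Spark installation is available:
#   from pyspark.sql import SparkSession
#   spark = SparkSession.builder.appName("merge-sketch").getOrCreate()
#   merge_and_count(spark, [("a", 1), ("a", 2), ("b", 3)], [("a", 10), ("b", 20)])
```

The same code runs unchanged on a laptop or on a cluster. Only the session configuration differs.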

Apache Spark also supports different types of data structures:

- Resilient Distributed Datasets (RDD)

- Data frames

- Datasets

Spark data frames are more suitable for structured data with a well-defined schema, whereas RDDs are used for semi-structured and unstructured data.

Computation time comparison: Pandas vs. Apache Spark

While running multiple merge queries on a 100-million-row data frame, Pandas ran out of memory. An Apache Spark data frame, on the other hand, completed the same operation within 10 seconds. Since a Pandas dataframe is not distributed, processing a large amount of data with it will be slower.

Deciding Between Pandas and Spark

Pandas dataframes are in-memory and single-server, so their size is limited by your server memory and you will process them with the power of a single server.
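One rough way to check whether a data set will fit on a single server is to measure a sample and extrapolate. In this sketch the helper `fits_in_memory` is hypothetical, and the `safety_factor` of 3 is an assumed allowance for the intermediate copies that merges and group-bys create, not a measured value.

```python
import pandas as pd


def fits_in_memory(sample, total_rows, available_bytes, safety_factor=3.0):
    """Extrapolate memory use from a sample data frame to `total_rows`.

    `safety_factor` is an assumed multiplier for intermediate copies.
    Returns (fits, estimated_bytes).
    """
    bytes_per_row = sample.memory_usage(deep=True).sum() / len(sample)
    estimated = bytes_per_row * total_rows * safety_factor
    return bool(estimated <= available_bytes), int(estimated)


# Hypothetical sample with two numeric columns and a 16 GiB server.
sample = pd.DataFrame({"id": range(1000), "price": [1.0] * 1000})
server_memory = 16 * 1024**3

print(fits_in_memory(sample, 1_000_000, server_memory))
print(fits_in_memory(sample, 39_000_000_000, server_memory))
```

If the estimate is well below the available memory, Pandas is usually the simpler choice. If it is far above, a distributed data frame is worth the extra setup.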

The advantages of using Pandas instead of Apache Spark are clear:

- no need for a cluster

- more straightforward

- more flexible

- more libraries

- easier to implement

- better performance when scalability is not an issue.

Spark dataframes are excellent for building scalable applications, as they are distributed across your Spark cluster. If you need to handle more data, you only need to add more nodes to your cluster.

New to Apache Spark?

Next Steps: Get to know Koalas by Databricks

After reading this blog, you might be concerned that you have to learn one more technology and memorize some more syntax to use Spark. Let me solve this problem too, by introducing you to “Koalas”.

“Koalas: Easy Transition from pandas to Apache Spark”

Pandas is a great tool to analyze small datasets on a single machine. When the need for bigger datasets arises, users often choose PySpark. However, converting code from pandas to PySpark is not easy, as PySpark APIs are considerably different from pandas APIs. Koalas makes the learning curve significantly easier by providing pandas-like APIs on top of PySpark. With Koalas, users can take advantage of the benefits of PySpark with minimal effort, and thus get to value much faster. [1]
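To give a feel for the pandas-like syntax, here is a minimal sketch. It assumes PySpark 3.2 or later, where the Koalas project is available as `pyspark.pandas`. With the standalone Koalas package, the import would be `import databricks.koalas as ks` instead. The function name and the column names are hypothetical.

```python
def revenue_by_product(pandas_df):
    """Run a pandas-style group-by on Spark through the pandas API on Spark.

    Assumes PySpark 3.2+ is installed and that `pandas_df` has the
    hypothetical columns `product`, `price`, and `units`.
    """
    # Imported inside the function so this file loads on machines without Spark.
    import pyspark.pandas as ps

    # Distribute the pandas data frame across the Spark cluster.
    psdf = ps.from_pandas(pandas_df)

    # The next two lines use the same syntax as plain pandas.
    psdf["revenue"] = psdf["price"] * psdf["units"]
    totals = psdf.groupby("product")["revenue"].sum()

    # Bring the small aggregated result back as a pandas Series.
    return totals.to_pandas().sort_index()


# Usage, assuming Spark is available:
#   import pandas as pd
#   df = pd.DataFrame({"product": ["A", "B", "A"], "price": [10.0, 12.5, 10.0], "units": [3, 5, 2]})
#   revenue_by_product(df)
```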

[1] https://databricks.com/blog/2019/04/24/koalas-easy-transition-from-pandas-to-apache-spark.html